How to Build Your Own LLM Benchmark

Using real-world exams and task-specific evaluations to test what public benchmarks miss

This publication is by members of the Algorythm Community, a network of 20k+ black software engineers sharing technical insights across all fields of software development. Join us on LinkedIn and Facebook, and subscribe for more insights.

Most LLM benchmarks are broken not because the questions are bad, but because the industry treats them as proof of capability when they’re actually proof of optimization. A model that scores 90% on MMLU has demonstrated one thing: it’s good at MMLU. That number tells you almost nothing about whether it can handle your task, your users, or your domain.

It gets worse. Labs actively tune against public benchmarks. Training data gets curated to maximize leaderboard positions. The scores go up. The real-world performance stays flat. Benchmarks aren’t failing because they’re poorly designed. They’re failing because they’ve become marketing artifacts.

The fix is straightforward: stop relying on someone else’s eval and build your own. The performance that matters is performance on your task, in your context, for your users. Everything else is noise.

In this article, I walk through the full process from choosing an evaluation source to structuring a dataset, selecting models, and designing a scoring framework. I use the West African Senior School Certificate Examination (WASSCE) as the case study: a high-stakes regional exam taken by over 2 million students every year, many of whom already turn to AI tools for help. It’s a useful example for anyone who wants to evaluate models against real-world knowledge rather than relying solely on public benchmarks.

But the framework applies to any domain. Medical licensing exams. Legal bar questions. Industry-specific certifications. Internal company assessments. If you have a corpus of questions with known correct answers that represent your use case, you can build an eval.

Why Public Benchmarks Are Misleading You

MMLU, GPQA, HumanEval, GSM8K: these have become the standard scorecards for every LLM release. They’re treated as definitive. They’re not.

They’re being overfit. When every model release is tuned to maximize performance on the same benchmarks, the scores stop meaning what you think they mean. Labs don’t just train models; they train models to win leaderboards. A high MMLU score increasingly tells you how well a lab optimizes for MMLU, not how capable the model is on novel tasks.

They over-represent one slice of the world. AP exams, college-level science, competition math. All drawn from American and European academic traditions. If your users aren’t in that distribution, you’re evaluating against someone else’s reality.

They don’t test what you actually need. If you’re building an education platform for West African students, a legal research tool for Brazilian attorneys, or a diagnostic assistant for rural healthcare workers, the questions that matter aren’t in any public benchmark. Full stop.

Relying on public benchmarks to make product decisions is like hiring someone based on their SAT score. It tells you something. It doesn’t tell you enough. Here’s how to build the eval that does.

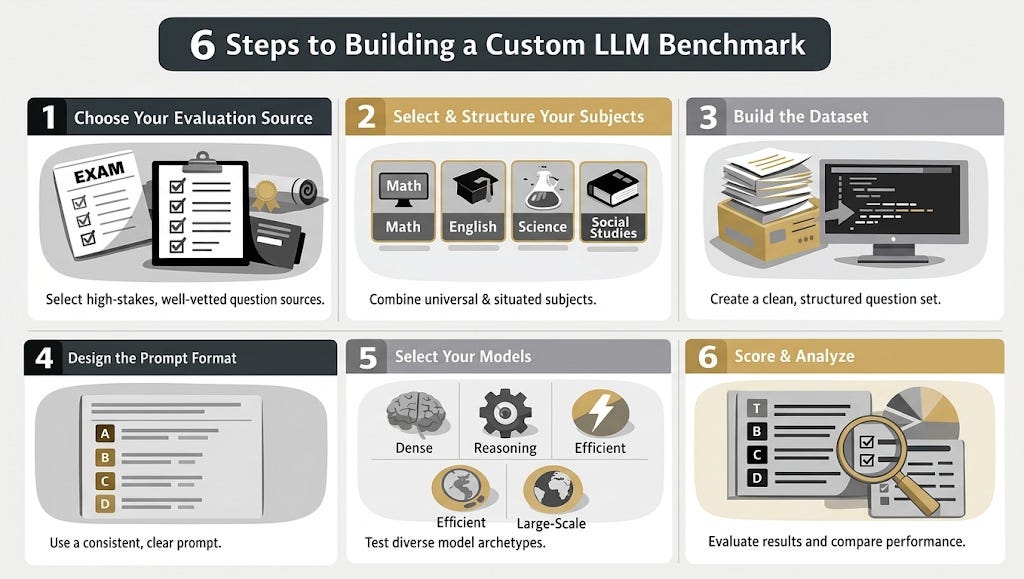

Step 1: Choose Your Evaluation Source

The foundation of any custom benchmark is a structured corpus of questions with verified correct answers. The best sources share three properties: they’re high-stakes (so the questions are well-vetted), they have clear right answers (so scoring is unambiguous), and they represent the knowledge domain you actually care about.

Good candidates: standardized exams (national or regional), professional certification tests, internal company assessments, and university-level subject exams.

What to avoid: Do not use scraped question banks without verified answer keys; you’ll be measuring noise. Avoid subjective or free-response questions unless you have a reliable grading pipeline (human or model-based). And stay away from question sets smaller than 200 questions per subject. Below that threshold, your results will be too noisy to draw real conclusions.
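To see why small question sets are noisy, here is a minimal sketch of the approximate 95% margin of error on an accuracy score, using the normal approximation to the binomial. The function name and the 70% example accuracy are illustrative assumptions, not numbers from the WASSCE runs.

```python
import math


def margin_of_error(accuracy: float, n_questions: int, z: float = 1.96) -> float:
    """Half-width of an approximate 95% confidence interval for an accuracy
    measured on n_questions (normal approximation to the binomial)."""
    if n_questions <= 0:
        raise ValueError("n_questions must be positive")
    return z * math.sqrt(accuracy * (1.0 - accuracy) / n_questions)


if __name__ == "__main__":
    # Hypothetical observed accuracy of 70% at several dataset sizes.
    for n in (50, 200, 1000):
        moe = margin_of_error(0.70, n)
        print(f"n={n:>4}: 70% +/- {moe * 100:.1f} points")
```

Under these assumptions, 50 questions gives roughly plus or minus 13 points, and 200 questions still leaves about 6. That is why single-paper results should be pooled across years before you compare models.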

The WASSCE example: Every year, over 2 million candidates across five West African countries (Ghana, Nigeria, Sierra Leone, The Gambia, and Liberia) sit for the West African Senior School Certificate Examination. It covers a standardized curriculum, has published marking schemes, and is the single most consequential exam in the region, gating university admission for an entire generation.

I took this exam at Presbyterian Boys’ Secondary School (Presec Legon) in Accra, Ghana. I know the content, I know the stakes, and I know that nobody in the AI evaluation space is testing models against it. That gap is exactly the opportunity.

WASSCE also offers something most benchmarks can’t: a natural gradient between universal knowledge and regionally situated knowledge. Some subjects test the same physics and algebra you’d find anywhere. Others require knowledge of ECOWAS trade policy, the 1992 Ghanaian constitution, or the ecological zones of the Sahel. That gradient lets you measure not just whether a model is capable, but where its knowledge actually comes from.

Step 2: Select and Structure Your Subjects (The Situated Evaluation Framework)

This is the most important design decision in your benchmark, and most people get it wrong by testing only one type of knowledge.

I call this the Situated Evaluation Framework: every custom benchmark should include both universal subjects (where you’d expect models to perform well regardless of training distribution) and situated subjects (where performance depends on how well the training data represents your specific domain).

The universal subjects are your control group. They establish a baseline: “this model is generally capable.” The situated subjects are your stress test. They answer the question that actually matters: “is this model capable for my users?”

The spread between them is the signal. A model that scores 85% on your universal subjects and 45% on your situated ones doesn’t have a general capability problem. It has a training data problem. That distinction changes everything about how you respond — you don’t need a bigger model, you need better data or fine-tuning.
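The spread can be computed directly once you have per-subject accuracy. This is a minimal sketch; the function name, the subject groupings, and the scores in the example are hypothetical and only mirror the 85% versus 45% illustration above.

```python
from statistics import mean


def situated_gap(scores: dict[str, float],
                 universal: set[str],
                 situated: set[str]) -> float:
    """Mean accuracy on universal subjects minus mean accuracy on situated ones.

    A large positive value suggests a training-data gap rather than a
    general capability problem."""
    if not universal or not situated:
        raise ValueError("need at least one universal and one situated subject")
    universal_mean = mean(scores[s] for s in universal)
    situated_mean = mean(scores[s] for s in situated)
    return universal_mean - situated_mean


if __name__ == "__main__":
    # Hypothetical scores for one model, not measured results.
    scores = {"Core Mathematics": 0.85, "Social Studies": 0.45}
    gap = situated_gap(scores, {"Core Mathematics"}, {"Social Studies"})
    print(f"situated gap: {gap * 100:.0f} points")
```

Reporting the gap alongside raw accuracy keeps the comparison honest: two models with the same overall score can have very different spreads.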

The WASSCE example — four core subjects:

Core Mathematics (Universal Control)

50 multiple-choice questions covering algebra, geometry, trigonometry, statistics, and number theory. This is the control group; the content is largely universal. If a model can handle GSM8K or MATH, it should perform well here. If it doesn’t, that tells us something about question formatting or curriculum-specific conventions rather than knowledge gaps.

English Language (Cultural Fluency Test)

Comprehension passages, vocabulary in context, grammar, sentence construction, and an oral English section tested via written proxies like stress and intonation patterns. The comprehension passages are often drawn from West African literary or cultural contexts. A model trained predominantly on American or British English corpora might parse the grammar fine but miss the contextual cues. This subject tests cultural fluency, not just linguistic competence.

Integrated Science (Applied Regional Knowledge)

Biology, chemistry, physics, and earth science, but with an applied, West African lens. Questions reference local agricultural practices, tropical diseases, regional ecosystems, and public health issues relevant to the subregion. This is where you start to see whether a model’s “science knowledge” is truly general or just Western-general.

Social Studies (Maximum Regional Specificity)

This is the subject most likely to break models. Social Studies on WASSCE covers governance structures in West Africa, the role of ECOWAS, environmental sustainability in the Ghanaian context, youth and national development, and cultural practices across the subregion. A model might ace AP US Government and have no idea what the Directive Principles of State Policy are in the 1992 Constitution of Ghana.

This is the real test of whether training data represents the world or just part of it.

How to apply this to your domain: Pick 3-5 subjects or topic areas. At least one universal control, at least one situated stress test. If every subject in your benchmark is situated, you have no baseline. If every subject is universal, you’re rebuilding MMLU. You need the gradient.

Step 3: Build the Dataset

This is the most labor-intensive step, and the one that produces the most lasting value. Your goal is a clean, machine-readable, structured dataset that can be run through any eval harness.

The sourcing pipeline:

Collect source material — past papers, question banks, or assessment archives in whatever format they exist (PDF, image, print)

Extract and digitize — OCR, manual transcription, or a combination

Verify against answer keys — cross-check with official marking schemes or expert review

Structure into a standard format — JSON is the most common for eval frameworks

A single question entry might look like:

    {
      "subject": "Social Studies",
      "year": 2023,
      "question_number": 15,
      "question": "The main objective of ECOWAS is to promote",
      "options": {
        "A": "military cooperation among member states",
        "B": "economic integration among member states",
        "C": "cultural exchange programs in West Africa",
        "D": "political unification of West African states"
      },
      "correct_answer": "B"
    }

A note on data availability: If you’re working with educational data from regions outside the US or Europe, structured, machine-readable datasets are rare. Part of the value of projects like this is building that artifact. The dataset itself becomes a contribution.

For well-resourced domains (US medical licensing, bar exams, etc.), you may find existing structured datasets. But even then, building your own gives you control over quality, scope, and format.
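Before running anything, it helps to check every entry against the format you settled on. Here is a minimal sketch of a validator for the question schema shown in this step. The function name and the specific checks are my assumptions about what "clean" means; extend them for your own corpus.

```python
REQUIRED_FIELDS = {
    "subject", "year", "question_number",
    "question", "options", "correct_answer",
}


def validate_entry(entry: dict) -> list[str]:
    """Return a list of problems found in one question entry (empty if clean)."""
    missing = REQUIRED_FIELDS - set(entry)
    if missing:
        return [f"missing fields: {sorted(missing)}"]

    problems = []
    options = entry["options"]
    if not isinstance(options, dict) or len(options) < 2:
        problems.append("options must be a mapping with at least two choices")
    elif entry["correct_answer"] not in options:
        problems.append("correct_answer is not one of the option letters")
    if not str(entry["question"]).strip():
        problems.append("question text is empty")
    return problems


if __name__ == "__main__":
    sample = {
        "subject": "Social Studies",
        "year": 2023,
        "question_number": 15,
        "question": "The main objective of ECOWAS is to promote",
        "options": {
            "A": "military cooperation among member states",
            "B": "economic integration among member states",
            "C": "cultural exchange programs in West Africa",
            "D": "political unification of West African states",
        },
        "correct_answer": "B",
    }
    print(validate_entry(sample))  # an empty list means no problems found
```

Running this over the whole dataset after OCR catches the most common digitization errors, such as a dropped option or an answer key that points at a letter that does not exist.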

Step 4: Design the Prompt Format

Keep it simple. For multiple-choice evaluations, zero-shot prompting with a clear instruction format is the cleanest baseline:

    The following is a multiple choice question from [Exam Name] in [Subject].

    Question: [question text]

    A. [option A]
    B. [option B]
    C. [option C]
    D. [option D]

    Answer with just the letter of the correct option.

Zero-shot measures the model’s baseline knowledge without in-context learning crutches. This is what you want for an initial benchmark. Few-shot variants (providing example questions and answers before the test question) are worth exploring in follow-up work to measure how quickly models can adapt.

Why this matters: The prompt format directly affects results. If your prompt is ambiguous, you’re benchmarking prompt sensitivity, not knowledge. Keep it clean, keep it consistent across all subjects and models.
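One way to keep the format consistent is to render every prompt from a single template and parse replies with a single strict rule. The sketch below assumes the question schema from Step 3. The helper names and the choice to reject anything that is not a bare letter are mine, not a standard.

```python
import re
from typing import Optional

PROMPT_TEMPLATE = (
    "The following is a multiple choice question from {exam} in {subject}.\n\n"
    "Question: {question}\n\n"
    "{options}\n\n"
    "Answer with just the letter of the correct option."
)

# Accepts "B", "b.", "(C)" and similar; rejects full sentences.
_ANSWER_RE = re.compile(r"^\(?([A-D])\)?[.:]?$")


def build_prompt(entry: dict, exam: str) -> str:
    """Render one question entry into the fixed zero-shot template."""
    options = "\n".join(
        f"{letter}. {text}" for letter, text in sorted(entry["options"].items())
    )
    return PROMPT_TEMPLATE.format(
        exam=exam,
        subject=entry["subject"],
        question=entry["question"],
        options=options,
    )


def parse_answer(reply: str) -> Optional[str]:
    """Return the option letter if the reply is just a letter, else None."""
    match = _ANSWER_RE.match(reply.strip().upper())
    return match.group(1) if match else None
```

A strict parser returns `None` for replies like "The answer is B", so you can count and inspect unparsed replies instead of silently guessing. Models that emit reasoning before the answer will likely need a looser rule, and whatever rule you pick should be applied identically to every model.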

Step 5: Select Your Models

Don’t just run the biggest model available. The goal is to test hypotheses, not collect trophies.

Pick one model from each of four archetypes:

The Dense Baseline — a standard, well-understood model with strong general capability. This is your reference point. (e.g., DeepSeek-V3-0324, Llama-3.3-70B-Instruct, GPT-OSS-120B)

The Reasoning Specialist — a model optimized for chain-of-thought or extended thinking. Does explicit reasoning help on your situated content, or only on math and logic? (e.g., DeepSeek-R1-0528, Kimi-K2-Thinking)

The Efficient Model — a smaller or mixture-of-experts (MoE) architecture. MoE models activate only a subset of their parameters per token, trading total size for speed and cost. This tests whether you can get comparable results at lower compute. (e.g., Nemotron-3-Nano-30B, Gemma-3-12b-it)

The Multilingual/Large-Scale Entry — a model with broader training data or massive scale. Tests whether more data or more parameters close the gap on situated knowledge. (e.g., Qwen3-235B-A22B-Instruct, Nemotron-3-Super-120B)

The WASSCE example: I’m running all nine models listed above across the four core subjects. The specific hypotheses: Does Qwen3’s multilingual pretraining give it an edge on West African Social Studies? Does DeepSeek-R1’s reasoning help on situated content, or only on Maths? Does Gemma at 12B hold up against models 10x its size on universal subjects?

All of these models are available on Crusoe Intelligence Foundry via managed inference — one API, no GPU management. Run the full eval without provisioning a single machine.
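The run itself is a loop over models and questions. The sketch below deliberately does not assume any provider's API: each model is a hypothetical callable that takes a prompt string and returns the reply text, so you can wrap whatever inference client you use. The record fields are also my own choice.

```python
from typing import Callable, Iterable

# Hypothetical type: any function mapping a prompt string to a reply string.
AskFn = Callable[[str], str]


def run_eval(models: dict[str, AskFn],
             questions: Iterable[dict],
             make_prompt: Callable[[dict], str]) -> list[dict]:
    """Ask every model every question and record the raw replies."""
    question_list = list(questions)
    records = []
    for model_name, ask in models.items():
        for q in question_list:
            records.append({
                "model": model_name,
                "subject": q["subject"],
                "year": q["year"],
                "question_number": q["question_number"],
                "reply": ask(make_prompt(q)),
                "correct_answer": q["correct_answer"],
            })
    return records


if __name__ == "__main__":
    # Stub "model" that always answers B, standing in for a real client.
    questions = [{
        "subject": "Social Studies", "year": 2023, "question_number": 15,
        "question": "The main objective of ECOWAS is to promote",
        "correct_answer": "B",
    }]
    records = run_eval({"always-b": lambda prompt: "B"},
                       questions,
                       make_prompt=lambda q: q["question"])
    print(records[0]["reply"])
```

Storing the raw reply rather than only the parsed letter means you can change the parsing rule later without paying for inference again.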

Step 6: Score and Analyze

For multiple-choice: accuracy (correct / total) per subject, per model. But raw accuracy is just the starting point. The interesting analysis comes from the comparisons.
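Per-subject, per-model accuracy is a small aggregation over the result records. This sketch assumes each record carries `model`, `subject`, `correct_answer`, and a `predicted` letter (or `None` when the reply could not be parsed). Those field names are my assumption, and unparsed replies are counted as wrong.

```python
from collections import defaultdict


def accuracy_table(results: list[dict]) -> dict[tuple[str, str], float]:
    """Map (model, subject) to the fraction of questions answered correctly."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for r in results:
        key = (r["model"], r["subject"])
        total[key] += 1
        # An unparsed reply (predicted is None) never equals the key, so it
        # is scored as incorrect.
        correct[key] += int(r["predicted"] == r["correct_answer"])
    return {key: correct[key] / total[key] for key in total}


if __name__ == "__main__":
    # Hypothetical records, not measured results.
    rows = [
        {"model": "m1", "subject": "Maths", "predicted": "A", "correct_answer": "A"},
        {"model": "m1", "subject": "Maths", "predicted": "B", "correct_answer": "A"},
        {"model": "m1", "subject": "Social Studies", "predicted": None, "correct_answer": "C"},
    ]
    for (model, subject), acc in sorted(accuracy_table(rows).items()):
        print(f"{model:<4} {subject:<15} {acc:.0%}")
```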

Cross-subject analysis: Do models uniformly degrade across your situated subjects, or is the drop concentrated in specific areas? If every model tanks on Social Studies but handles Maths fine, that’s a training data gap. If some models handle the transition better than others, that’s an architecture or training insight.

Question-type analysis: Within a subject, which topics trip models up? Constitutional history vs. arithmetic? Tropical agriculture vs. basic chemistry? This granularity tells you exactly where models need improvement for your use case.

Architecture effects: Do MoE models with fewer active parameters perform differently than dense models of comparable total size? This matters if you’re choosing models for deployment on constrained infrastructure.

Reasoning vs. baseline: Does explicit chain-of-thought (DeepSeek-R1, Kimi-K2-Thinking) actually help on culturally situated questions, or does it only improve performance on math and logic? If reasoning tokens don’t help on Social Studies, that tells us the gap is in knowledge, not in reasoning ability.
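Because both models answer the same questions, the reasoning comparison can be done question by question rather than on aggregate accuracy. A minimal sketch follows, assuming you have already reduced each model's results to a mapping from a question ID to whether it was answered correctly. The ID format and function name are hypothetical.

```python
def paired_outcomes(baseline: dict[str, bool],
                    reasoning: dict[str, bool]) -> dict[str, int]:
    """Compare two models on the questions they both answered.

    Keys are question IDs; values are True when that model was correct."""
    counts = {"both_right": 0, "only_reasoning": 0,
              "only_baseline": 0, "both_wrong": 0}
    for qid in baseline.keys() & reasoning.keys():
        b, r = baseline[qid], reasoning[qid]
        if b and r:
            counts["both_right"] += 1
        elif r:
            counts["only_reasoning"] += 1
        elif b:
            counts["only_baseline"] += 1
        else:
            counts["both_wrong"] += 1
    return counts


if __name__ == "__main__":
    # Hypothetical per-question outcomes, not measured results.
    base = {"ss-2023-15": False, "ss-2023-16": True, "ss-2023-17": False}
    reas = {"ss-2023-15": True, "ss-2023-16": True, "ss-2023-17": False}
    print(paired_outcomes(base, reas))
```

A large `both_wrong` count on Social Studies alongside a small `only_reasoning` count would be consistent with a knowledge gap that extra reasoning does not close.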

Common Mistakes That Will Wreck Your Benchmark

I’ve seen enough eval projects go sideways to know where the landmines are. Avoid these.

Dataset leakage. If your eval questions appeared in the model’s training data, your benchmark is measuring memorization, not capability. This is the single most common failure mode. Use questions from recent exam years, check for contamination, and if in doubt, hold back a subset of questions that you never publish.
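One simple way to hold back a private subset is to assign questions deterministically by hashing their identity, so the split stays stable as the dataset grows. This is a sketch under my own assumptions: the 20% fraction, the salt, and the choice of fields used as the key are all placeholders to adapt.

```python
import hashlib


def is_private_holdout(entry: dict,
                       fraction: float = 0.2,
                       salt: str = "change-me") -> bool:
    """Deterministically decide whether a question stays unpublished.

    The same entry always lands in the same split for a given salt."""
    key = f"{salt}|{entry['subject']}|{entry['year']}|{entry['question_number']}"
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a number in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return bucket < fraction


if __name__ == "__main__":
    entries = [
        {"subject": "Social Studies", "year": 2023, "question_number": n}
        for n in range(1, 51)
    ]
    private = [e for e in entries if is_private_holdout(e)]
    print(f"{len(private)} of {len(entries)} questions held back")
```

Keep the salt out of the public repository. If scores on the published questions drift upward over time while the private subset stays flat, that is a hint the public portion has leaked into training data.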

Prompt inconsistency. If you change the prompt format between subjects or models, you’re benchmarking prompt sensitivity, not knowledge. Lock the format. Use the exact same template for every question, every model, every run.

Treating accuracy as ground truth. A model that scores 72% on one run and 68% on the next hasn’t necessarily changed; a gap that size can be run-to-run noise. Run multiple passes, report variance, and don’t draw conclusions from accuracy differences of only a few percentage points. If your dataset is small (under 200 questions per subject), confidence intervals matter more than point estimates.
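To put a number on that, here is a sketch that computes a Wilson score interval for each result and checks whether two intervals overlap. The 200-question count is an assumption chosen to match the threshold in this article, and overlap is a conservative rule of thumb rather than a formal significance test.

```python
import math


def wilson_interval(correct: int, total: int, z: float = 1.96) -> tuple[float, float]:
    """Approximate 95% Wilson score interval for a proportion."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = correct / total
    denom = 1.0 + z * z / total
    center = (p + z * z / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z * z / (4 * total * total)) / denom
    return center - half, center + half


def intervals_overlap(a: tuple[float, float], b: tuple[float, float]) -> bool:
    """True when two (low, high) intervals share any point."""
    return a[0] <= b[1] and b[0] <= a[1]


if __name__ == "__main__":
    # 72% and 68% on a hypothetical 200-question subject.
    run_a = wilson_interval(144, 200)
    run_b = wilson_interval(136, 200)
    print(f"run A: {run_a[0]:.3f} to {run_a[1]:.3f}")
    print(f"run B: {run_b[0]:.3f} to {run_b[1]:.3f}")
    print("overlap:", intervals_overlap(run_a, run_b))
```

At 200 questions both intervals are about twelve points wide and overlap heavily, so a four-point difference on its own does not separate the two runs.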

Ignoring the failure mode. Accuracy tells you how often the model is right. It doesn’t tell you how it’s wrong. A model that consistently picks the same wrong answer on constitutional questions has a knowledge gap. A model that picks randomly has a comprehension problem. The fix is different. Look at the errors, not just the score.
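If you run each question several times, you can separate those two failure patterns by looking at how concentrated the wrong picks are. The sketch below is one possible summary. The function name, the output fields, and the repeated-runs setup are my assumptions.

```python
from collections import Counter


def wrong_answer_profile(picks: list[str], correct: str) -> dict:
    """Summarize how a model misses one question across repeated runs.

    top_wrong_share near 1.0 means the model keeps choosing the same wrong
    option; a low share means its errors are scattered."""
    wrong = [p for p in picks if p != correct]
    if not wrong:
        return {"error_rate": 0.0, "top_wrong": None, "top_wrong_share": 0.0}
    top, count = Counter(wrong).most_common(1)[0]
    return {
        "error_rate": len(wrong) / len(picks),
        "top_wrong": top,
        "top_wrong_share": count / len(wrong),
    }


if __name__ == "__main__":
    # Hypothetical picks over five runs; the correct answer is B.
    print(wrong_answer_profile(["C", "C", "C", "B", "C"], correct="B"))
    print(wrong_answer_profile(["A", "C", "D", "B", "A"], correct="B"))
```

Both examples have the same error rate, but the first concentrates on one distractor (a confident wrong belief) while the second is spread across options (closer to guessing).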

Overfitting your own eval. If you keep iterating on prompts until a specific model scores well on your benchmark, congratulations: you’ve done the same thing you criticized the labs for. Set the format once. Run it. Report what you find.

What I Expect to Find (WASSCE Predictions)

I’ll be upfront about my priors. These are testable hypotheses, not conclusions.

Core Maths: Models will perform reasonably well — 70-85% for the larger ones. The content is universal enough that existing training data should cover it.

English: Mid-range performance. Grammar and vocabulary will be fine. Comprehension passages with West African cultural context will cause noticeable drops.

Integrated Science: Slightly below Maths. Universal science questions will be handled. Locally contextualized ones (tropical agriculture, regional health issues) will be weaker.

Social Studies: The biggest gap. I’d be surprised if any open model breaks 60% without specific fine-tuning on West African content.

If these priors hold, the implication is straightforward: open models have a regional knowledge gap, and it’s measurable. That matters for anyone building AI-powered education tools, tutoring systems, or information services for West Africa or any region underrepresented in English-language training data.

Why This Matters

The Situated Evaluation Framework is general. Any domain where public benchmarks don’t cover your use case (and that’s most domains) benefits from building custom evals. The methodology in this post works for medical licensing in Southeast Asia, legal reasoning in Latin America, or internal compliance assessments at your company.

But the WASSCE case study highlights something that needs to be said directly.

West Africa has one of the youngest populations on Earth. Millions of students prepare for WASSCE every year, and they’re increasingly turning to AI-powered tools for study help. If the open models behind those tools have systematic blind spots on the very content these students need to learn, that’s not a minor gap. That’s a failure mode with consequences measured in denied university admissions and misdirected study time.

MMLU told us models were getting good at Western academic knowledge. That was useful. But it was never the whole picture. The question this benchmark answers is whether progress is truly global or whether we’re building intelligence that only knows half the world.

The rule is simple: if it’s not in your eval, it doesn’t exist to your model.

Build the benchmark. Run the numbers. Make the gaps impossible to ignore.

The WASSCE benchmark dataset and eval pipeline are open source at https://github.com/acheamponge/wassce-benchmark. If you found this useful, share it with someone building AI for underrepresented communities.

About The Author

Emmanuel Acheampong is a Senior Manager at Crusoe AI and previously co-founded and led AI at yshade.ai. He took the WASSCE at Presec Legon in Accra, Ghana.